3 learnings from the deep learning for sentiment analysis workshop

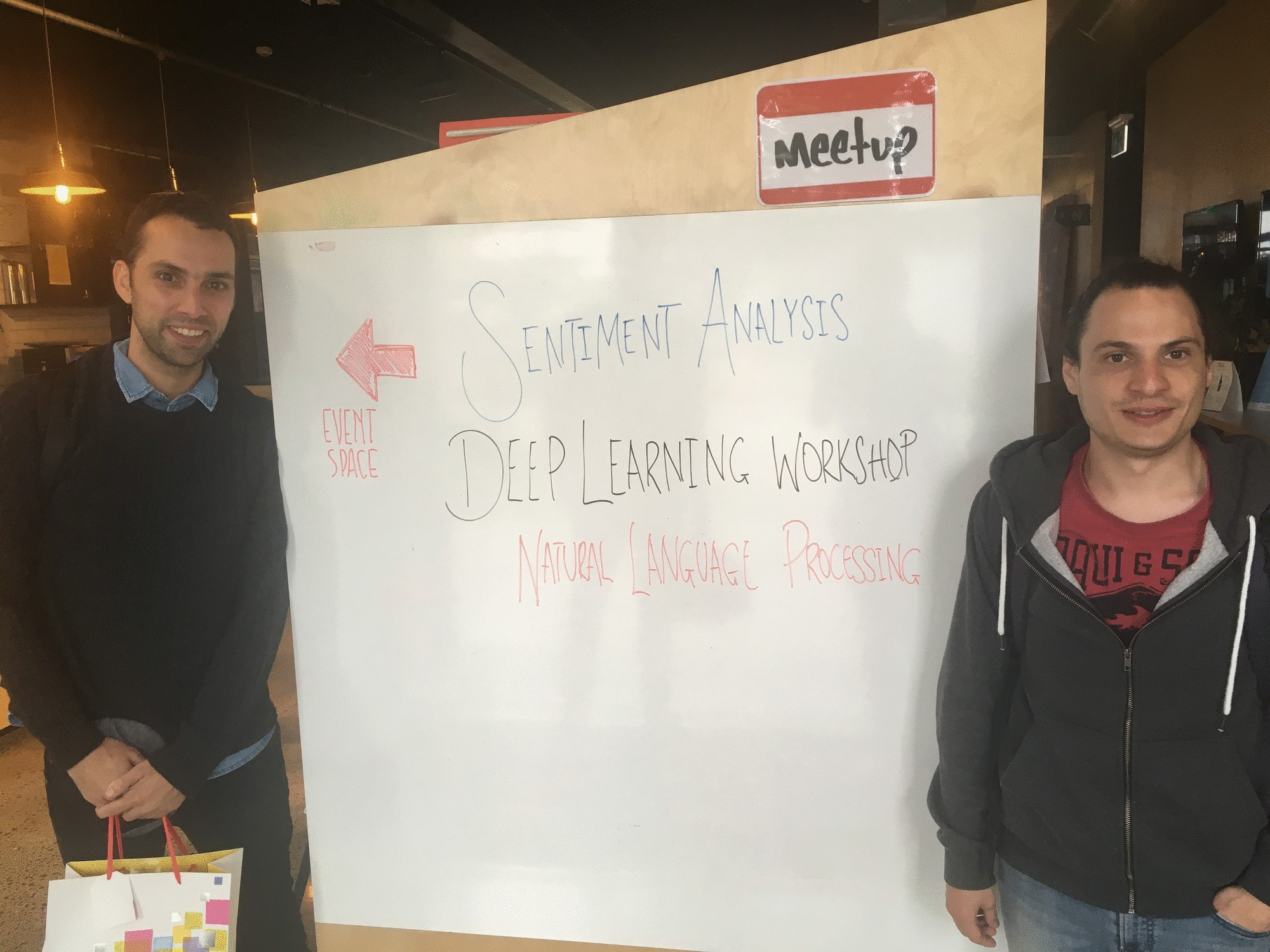

A couple of years ago, our team at Thematic organized and sponsored a free Deep Learning for Sentiment Analysis workshop led by Dr. Felipe Bravo-Marquez. Despite being announced on short notice, it was fully booked within 24 hours, with over 40 attendees eager to dive into the world of NLP and deep learning.

Felipe, alongside co-host Dr. Edison Marrese, both postdoctoral researchers specializing in NLP, delivered an incredibly insightful session. They broke down complex concepts, covering distributional word similarity, word embeddings, convolutional and recurrent neural networks, and the mathematical foundations behind these approaches.

Beyond theory, they also shared practical insights on models, experimental techniques, and real-world projects. While the workshop was condensed from a three-day course into just three hours, they managed to pack in the most valuable takeaways.

Though it’s been some time since that session, the knowledge shared remains as relevant as ever, making it worth revisiting today.

Here are my 3 key learnings:

1. Easily use and adopt models created by other researchers instead of creating your own

Whether you're working on sentiment analysis or other text analytics techniques, creating a deep learning model requires a huge amount of work on pulling together the right kind and the right amount of data, setting up the learning environment, and running the algorithms on a server.

The results are often published on GitHub and other websites. Models, shared as part of the results, can be adopted for similar use-cases, often without the need of retraining.

“Fine-tuning the network with your own data is usually the best approach” – Felipe Bravo

For example, the winning solution in a recent sentiment analysis competition was an ensemble of deep learning models trained on various data representations.

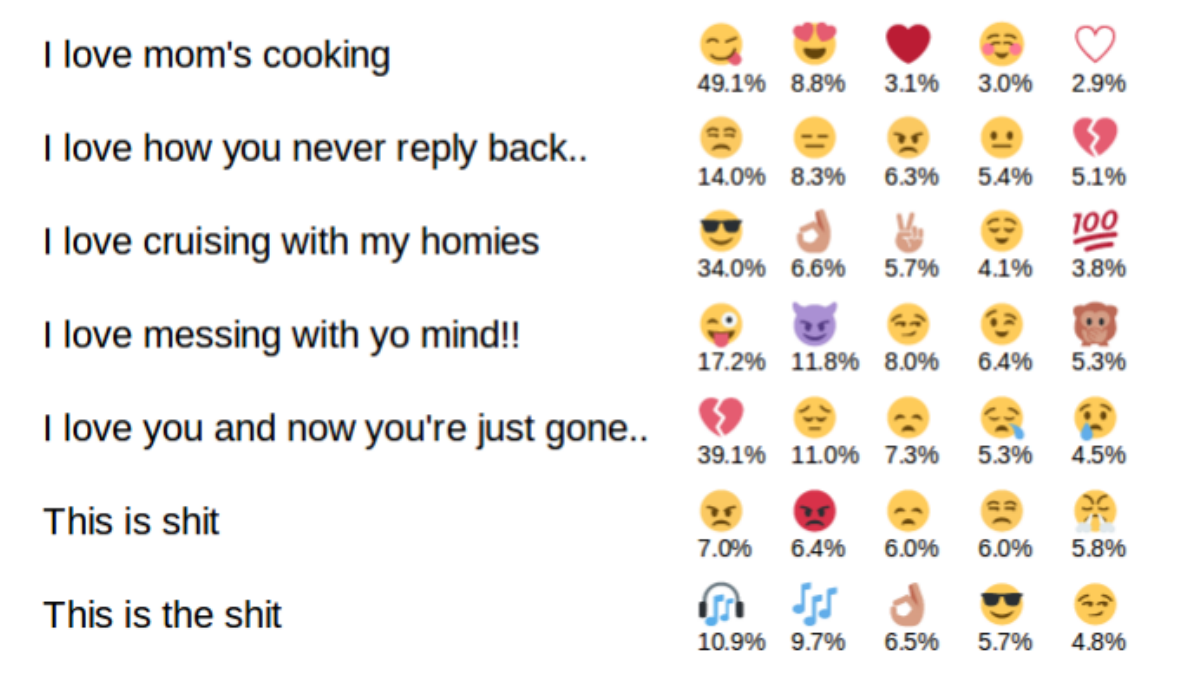

One model that we can’t wait to experiment with at Thematic is the DeepEmoji project, where the specific sentiment of phrases such as “this movie was shit” and “this movie was the shit” could be discovered.

Imagine doing social media sentiment analysis with the capability of understanding emojis: we can capture emotions expressed not by words.

2. Big trend to use character-based NLP models

Typically, natural language processing (NLP) models are trained by splitting text into sequences of words. This works particularly well for the English language.

Compared to languages like Finnish or Russian it has a very small number of suffixes and endings. Compared to German, words aren’t typically combined to form new words. Compared to Japanese and Chinese, words are always separated by whitespaces.

English is one of the easiest languages to analyze.

Interestingly, deep learning models trained on character sequences don’t rely on language-specific methods for dealing with special language characteristics, such as tokenization for splitting text into words.

Because the English language usually has the greatest amount of training data, this means that other languages can now benefit from character based models.

Similarly, in the 90s, one of the first usable language detection algorithms used the most common character sequence patterns to detect a language. There are hidden linguistic properties of languages that most people aren’t aware of, but deep learning models can capture them.

3. Customer feedback analysis is one of the hardest NLP tasks

Our post-event discussions with Felipe and Edison about what we do at Thematic were thought-provoking. In the academic world, thematic analysis of people’s reviews is called “aspect detection.”.

For example, “room cleanliness," “breakfast quality”, “location”, “price”, “check out” are all aspects of hotel reviews. Hotel owners need to know which sentiment is attached to each aspect.

At Thematic, we deal with a variety of businesses, and each one has their unique set of aspects. In fact, our customers don’t just want to know the sentiment of top-level aspects; they need an in-depth understanding of what is actually driving that sentiment.

We solve this problem by automatically extracting up to hundreds of aspects or themes that are the most common in customer responses. The same algorithm automatically groups these themes into broader categories for easy analysis.

When we explained this process, Felipe and Edison agreed that it’s an extremely hard task to solve across a variety of datasets.

Because most businesses don’t have training data, deep learning algorithms can’t easily help, and an approach specifically crafted for this task (as we’ve done at Thematic) is required.

In the academic world, researchers tend to compete on clearly defined tasks and datasets that can be shared. While it’s possible to design a task around hotel reviews, a cross-domain approach is much harder, particularly given how subjective this task is.

I believe that the best ideas come from such interactions, and I’m sure the workshop attendees have benefited from the knowledge shared at this workshop. A huge thanks to GridAKL for sponsoring the venue and Felipe and Edison for running it.

Find out how Thematic can help you. Try Thematic now!

Stay up to date with the latest

Join the newsletter to receive the latest updates in your inbox.