What GPT-3 means for Customer Feedback Analysis

What is GPT-3 exactly? And what does this new AI model mean for customer feedback analysis?

The latest exciting news in AI is a new language model called GPT-3, built by OpenAI. OpenAI is a research company co-founded by Elon Musk and Sam Altman. Sam was the president of Y Combinator, the startup accelerator Thematic completed.

So what is GPT-3 exactly? How good is this model at analyzing customer feedback? And what does it mean for anyone working with feedback?

In summary: We tested GPT-3 on a dataset of 250 pieces of feedback that we knew intimately. Instead of reflecting what was actually in the feedback, the output consisted of suggestions the model had learned from things people said on the Web.

What is GPT-3 and why did it go viral?

What is a language model? A language model is created by analyzing a large body of text. The model records word frequencies: on their own, and in different contexts. This means that a language model can determine how similar two words are. It can also predict which word should come next after a given one. And it can generate human-like text.
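To make the idea of "recording word frequencies in context" concrete, here is a minimal sketch of a bigram model: it counts which word follows which, then predicts the most frequent follower. This is a toy illustration only. GPT-3 is a far larger neural model and does not work by simple counting, and the corpus and function names here are made up for the example.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word is followed by each other word."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            following[current_word][next_word] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus, invented for illustration only.
corpus = [
    "the delivery was fast",
    "the delivery was late",
    "the delivery was fast and the staff was friendly",
]
model = train_bigram_model(corpus)

print(predict_next(model, "delivery"))  # was
print(predict_next(model, "was"))       # fast
```

Even this tiny model "generates" plausible text by chaining predictions, which is also why a model can sound fluent without knowing what the words mean.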

Researchers have been creating language models for over a century. Language models are core elements of most Natural Language Processing algorithms. We use them at Thematic.

The quality of the language model depends on the amount of data it has seen. GPT-3 is the largest model out there as of mid 2020. It has 175 billion parameters and was trained on a random subset of the Web. Training it cost OpenAI an estimated $12M!

OpenAI released the GPT-3 Playground, an online environment for testing the model. Give it a short prompt and GPT-3 generates an answer. It is tricky to create these prompts. But once you start experimenting, you can generate some impressive examples. Here are some examples of what GPT-3 can do:

- Write responses to philosophical essays

- Outline its plan for starting a new religion

- Create fiction in any writing style

- Generate programming code from a natural language description

As an NLP researcher myself, I was skeptical. Language models understand how language works but not its meaning. And yet, when I saw GPT-3’s ability to summarize and abstract, my curiosity was piqued.

For example, after seeing a shopping list with 5 items, the model concluded that its owner wants to bake bread.

One more GPT-3 example. It's able to deduct intent based on four-five product titles. Amazed by the baking bread example. pic.twitter.com/5UVj1P0Nml

— rspineanu (@rspineanu) July 19, 2020

Why apply GPT-3 to customer feedback?

When we analyze customer feedback, we summarize and abstract many opinions into insights.

At Thematic, each visualization or chart answers a question like:

- Why are users unhappy?

- Why did my score drop this month?

- Or what do users say about a particular topic?

An analyst will add a natural language summary for each of these charts in their report. We’ve done this ourselves with our insights reports on Waze vs. Google and the Zoom boom.

What if GPT-3 could create such insights reports for us? What if you could even ask a question about what matters to your customers, and it would give you the answers?

Not everyone can try GPT-3 out: you have to apply! Luckily, a friend of mine got access and let me test it on a few datasets containing customer feedback. Here is what we found.

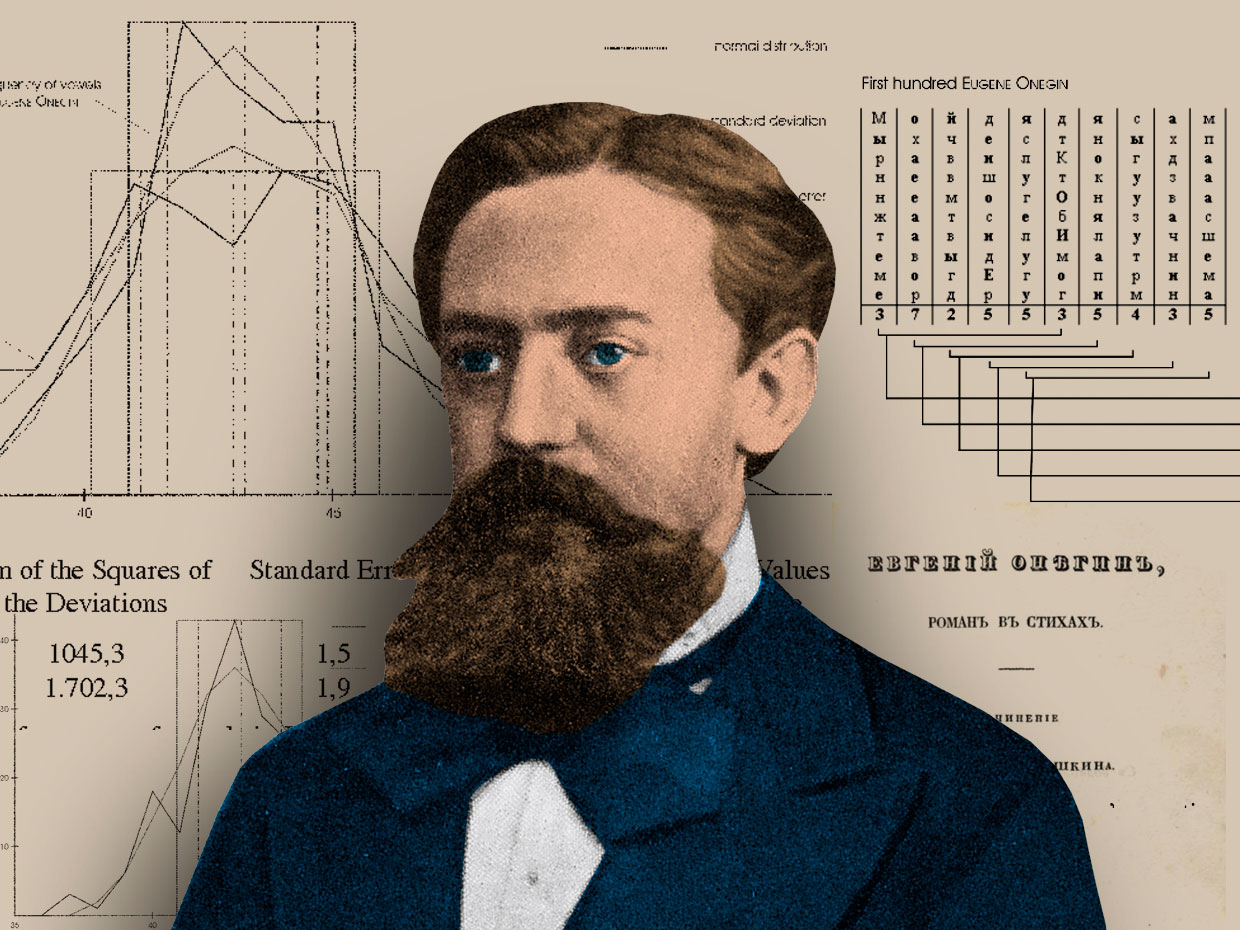

How can GPT-3 summarize feedback?

Unfortunately, OpenAI’s Playground limits the amount of data a prompt can take. But after iterating about a dozen times, we started seeing interesting results.

Here is an example of GPT-3’s summary of feedback for a generic eCommerce company:

GPT-3 did a great job at finding the correct aspects in the review:

Staff, website, shipping, customer service, pricing and product.

It also identified multi-word phrases such as “easy to reach”. But it did a poor job at something that should be basic: it did not treat “excellent”, “great”, and “good” as synonyms. In the context of reviews, it’s not that helpful to know these nuances.

Our next experiment was to feed GPT-3 students' feedback for their business school. This time we asked GPT-3 to identify the main complaints.

A pretty good and logical summary! It looks impressive, but it's factually incorrect.

At least 10 students had issues with the exams, but as you can see here, exams aren't mentioned among the top 5. Instead, student individualism is listed as one of the main complaints, even though it was only mentioned once.

In our prior evaluation of the same reviews, four people and Thematic identified the top 5 complaints made by the students. Among these was the issue of exam timing. GPT-3 missed it, so we tried to help the model a little by asking: What were the complaints about exams?

Again, we knew this dataset intimately and saw that GPT-3's output was inaccurate. It both invented complaints and missed actual complaints.

- GPT-3 said that exams need to be better prepared (and more serious?). In fact, students said that they want exams spread out, so that they have time to prepare.

- GPT-3 said that exams need to be better explained because they are not well understood, and that they are not in line with the rest of the curriculum. But none of the students mentioned this.

- GPT-3's points 2, 4 and 5 overlap. It completely missed the issues of spreading exams more evenly and announcing them earlier, which were the core complaints of the students, according to both people and Thematic.
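The kind of sanity check described above can be sketched in a few lines: for each theme a generated summary claims, count how many comments actually mention it. This is a deliberately naive keyword match with invented comments and keywords. It is not how Thematic or the human reviewers analyzed the data, only an illustration of checking a summary against the source.

```python
def count_mentions(feedback, keywords):
    """Count the comments that mention at least one of the keywords."""
    return sum(
        any(keyword in comment.lower() for keyword in keywords)
        for comment in feedback
    )


# Hypothetical comments, invented for this example.
feedback = [
    "Exams are too close together",
    "Please announce exams earlier",
    "Three exams in one week is too much",
    "Students are too individualistic",
]

# Hypothetical themes a generated summary might claim, with naive keywords.
claimed_themes = {
    "exams": ["exam"],
    "individualism": ["individual"],
    "unclear exam instructions": ["unclear", "not explained"],
}

support = {
    theme: count_mentions(feedback, keywords)
    for theme, keywords in claimed_themes.items()
}
unsupported = [theme for theme, count in support.items() if count == 0]

print(support)      # {'exams': 3, 'individualism': 1, 'unclear exam instructions': 0}
print(unsupported)  # ['unclear exam instructions']
```

A claimed theme with zero supporting comments is a strong hint that the summary invented it, and a theme with one mention should not outrank a theme with many.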

So, instead of summarizing the text in the prompt, GPT-3 created a coherent text inspired by it. It suggested improvements that random people mentioned on the Web! And guess what, the Playground demo has a parameter that could make the model even more creative.
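The paragraph above does not name the parameter. A common control of this kind in text generation is the sampling temperature, and the sketch below assumes that is what is meant. It shows how dividing the model's raw scores by a temperature changes the probabilities of the candidate next words. The scores are hypothetical.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in scores]
    highest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - highest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Hypothetical scores for three candidate next words.
scores = [2.0, 1.0, 0.1]

cautious = softmax_with_temperature(scores, 0.5)  # favors the top word
creative = softmax_with_temperature(scores, 2.0)  # spreads probability out

print([round(p, 2) for p in cautious])
print([round(p, 2) for p in creative])
```

At a low temperature the most likely word dominates. At a high temperature unlikely words get picked more often, which reads as "creative" but moves the output even further from the text in the prompt.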

It is unlikely that data-driven executives will need a creative interpretation of customer feedback. So, in its current form, applying GPT-3 to customer feedback doesn’t make sense.

Is there AI that can create customer feedback reports?

And yet, an auto-generated report of customer feedback is the holy grail. This report will answer your questions: the things you want to know. It will also shine light on the unknown unknowns: the things you don’t know but need to know. The insights.

A language model trained on third-party data, like GPT-3, won’t work. But what will? Is there a solution?

Robert Dale wrote a great state-of-the-art review of Natural Language Generation approaches. Pioneering companies in this space have been using smart templates populated with data. But today, the best solutions use neural language models. These models are like GPT-3 but highly specialized, or as Dale put it, “on a tight leash”. The approach is already adopted by Google and Microsoft in their products. One example I use every day, and love for making me more efficient, is Gmail's Smart Compose.
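As a rough illustration of the template approach, here is a minimal sketch in which a fixed sentence is filled with numbers computed from the data. The function name, wording, and counts are hypothetical and not taken from any real product or from the datasets in this article.

```python
def describe_top_theme(theme_counts, total_responses):
    """Fill a fixed sentence template with numbers computed from the data."""
    theme, mentions = max(theme_counts.items(), key=lambda item: item[1])
    share = round(100 * mentions / total_responses)
    return (
        f"The most common complaint was {theme}, "
        f"mentioned in {mentions} of {total_responses} responses ({share}%)."
    )


# Hypothetical counts, invented for this example.
theme_counts = {"exam timing": 10, "course workload": 6, "parking": 2}
summary = describe_top_theme(theme_counts, 50)

print(summary)
# The most common complaint was exam timing, mentioned in 10 of 50 responses (20%).
```

The output is less fluent than generated text, but every number in it comes from the feedback itself, which is exactly what GPT-3's summaries lacked.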

In the future, we’ll indeed see more and more auto-generated customer feedback reports. Well, not quite auto-generated, but rather created using augmented intelligence. Human and AI will co-author the reports in tandem. The AI will help break the writer’s block by providing a first summary of the insight. The human will refine the results before sharing them with the team. Specialized solutions will still use some templates to kick-start the analysis.

So what about GPT-3?

MIT Technology Review concludes that this shockingly good and completely mindless model is an achievement!

It has many new uses, both good and bad.

From powering better chat-bots and helping people code... to powering misinformation bots, and helping kids cheat on their homework.

But not in customer feedback analysis.
