What is Deep Learning? A brief history, and what it means for the future

At Thematic, we have a dedicated research team that is always innovating to bring you the most accurate sentiment and thematic analysis. Solving this difficult task requires in-depth understanding of both the problem of customer feedback analysis and the relevant advances in technology.

This article is a glimpse into one of the technologies we use here, called Deep Learning. Knowing the technical details of Deep Learning is not required to be able to see the power of its capabilities and what it will bring in the future.

Deep Learning: What is it?

Let’s start by getting the technical details out of the way...

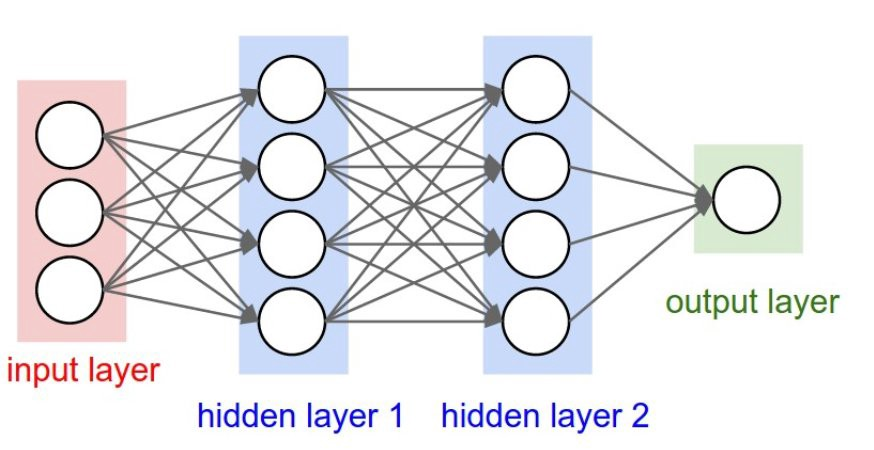

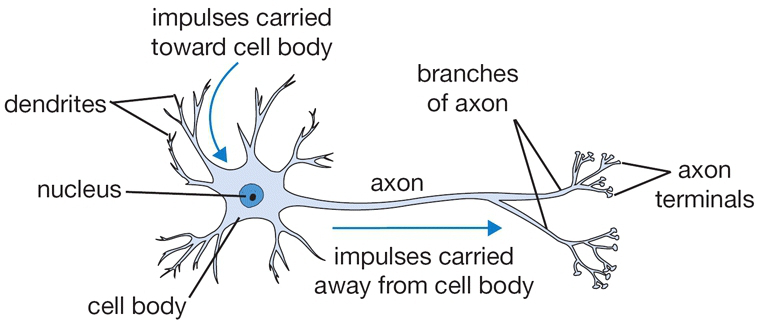

Deep Learning is a form of Machine Learning. It is called 'Deep' Learning because its models stack many layers of neurons. A neuron in a Deep Learning network is loosely modeled on a neuron in the human brain, which is why these models are also known as deep 'Artificial Neural Networks'.

A Deep Learning model is trained by setting an objective that measures how well the model performs its task, then optimizing the model’s parameters to improve on that objective. This differs from traditional Machine Learning, which requires manually crafting and selecting features (properties of the data that the algorithm should examine), a process that takes time and is not always effective.
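To make "set an objective, then optimize the parameters" concrete, here is a minimal sketch in plain Python. It is not how Thematic trains its models: the toy data, the two-parameter linear model, the learning rate and the function names `loss` and `train` are all assumptions chosen for illustration. A real Deep Learning model has many layers and far more parameters, but the loop has the same shape.

```python
# Toy data generated from y = 2x + 1 (an assumption made for this sketch).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]


def loss(w, b):
    """Objective: mean squared error between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(steps=2000, learning_rate=0.02):
    """Gradient descent: repeatedly nudge w and b to reduce the loss."""
    w, b = 0.0, 0.0  # the parameters start out knowing nothing
    n = len(xs)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step each parameter a little way against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train()
print(f"learned w={w:.3f}, b={b:.3f}, loss={loss(w, b):.6f}")
```

Nobody tells the model that the slope is 2 and the intercept is 1. It recovers values close to them purely by reducing the objective, which is the point of the contrast with hand-crafted features.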

Understanding how Deep Learning works is not important for this article, but if you would like a crash course on the details, have a look at this video: But what is a Neural Network?

The History of Deep Learning

Below is a timeline of some of the important events in the history of Deep Learning. It is by no means exhaustive, but these events were chosen because they help illustrate how Deep Learning arrived at where it is today.

Deep Learning Timeline:

- 1958: Perceptron Introduced (a simple precursor to deep learning)

- 1979: Convolutional Neural Network Invented (dominant deep learning architecture for image recognition in the 2010s)

- 1982: Recurrent Neural Network Invented (Sequence processing used for NLP)

- ❄️ AI WINTER ❄️

- 2009: ImageNet Introduced

- 2010: Kaggle Launched

- 2017: Transformer architecture invented

- 2022: ChatGPT goes live

The first three events show when some of the techniques used today were invented. At the time, these techniques could not yet be used for real-world applications, largely because computing power was hard to come by and some of the details that make them effective had yet to be discovered. An interesting point to keep in mind is that these technologies are not new ideas, but applying them effectively to real-world problems is relatively new.

While the exact date of the beginning and end of the AI winter is debatable, there is no question that there was an AI winter, and it was icy. Then, sometime around 2010, the frost began to melt. The reason I have included ImageNet and Kaggle on this timeline is that they were instrumental in the recent progress of Deep Learning, and the defrosting of the AI winter.

What is ImageNet and how did it help to advance Deep Learning?

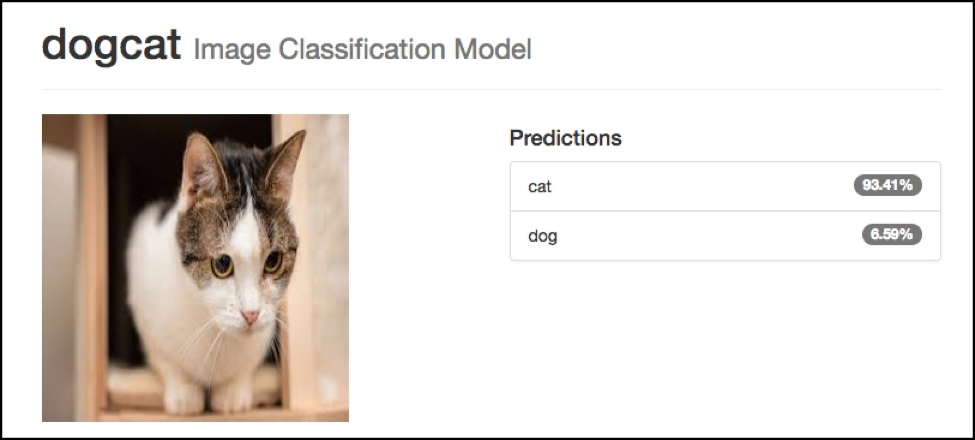

ImageNet is a large dataset used for image classification. Image classification is the task of taking an image and labeling it with the predefined category it belongs to. An example is a classifier that must decide whether each image shows a cat or a dog.

Another example (especially relevant to those who have seen the TV show Silicon Valley) is the “hot dog / not hot dog” classifier:

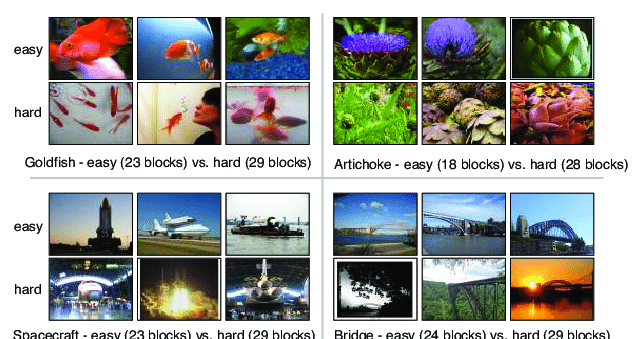
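In code, the last step of a classifier like these can be sketched as follows. This is an illustrative assumption rather than any particular system: the raw scores are made up, and `CATEGORIES`, `softmax` and `classify` are hypothetical names. In a real model, the scores would come from the neural network that has looked at the image.

```python
import math

CATEGORIES = ["cat", "dog"]  # hypothetical predefined label set


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(scores, categories=CATEGORIES):
    """Return the most probable category and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return categories[best], probs[best]


# Made-up scores standing in for a network's output on one image.
label, confidence = classify([0.3, 2.1])
print(label, round(confidence, 2))
```

Adding more categories only means a longer list of scores; the "pick the most probable label" step stays the same.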

ImageNet takes this task of image classification and extends it to over 20,000 different classes, with an average of more than 500 example images per class. Below are some real examples from the dataset.

What effect has ImageNet had on Deep Learning and AI?

- Provided a measure to benchmark how good a technique is

- Provided an arena for competition between different approaches

Much like how the UFC (Ultimate Fighting Championship) enabled different styles of fighting and martial arts to compete against each other, ImageNet has meant that different techniques of image recognition can be compared and the ones that work the best can rise to the top.

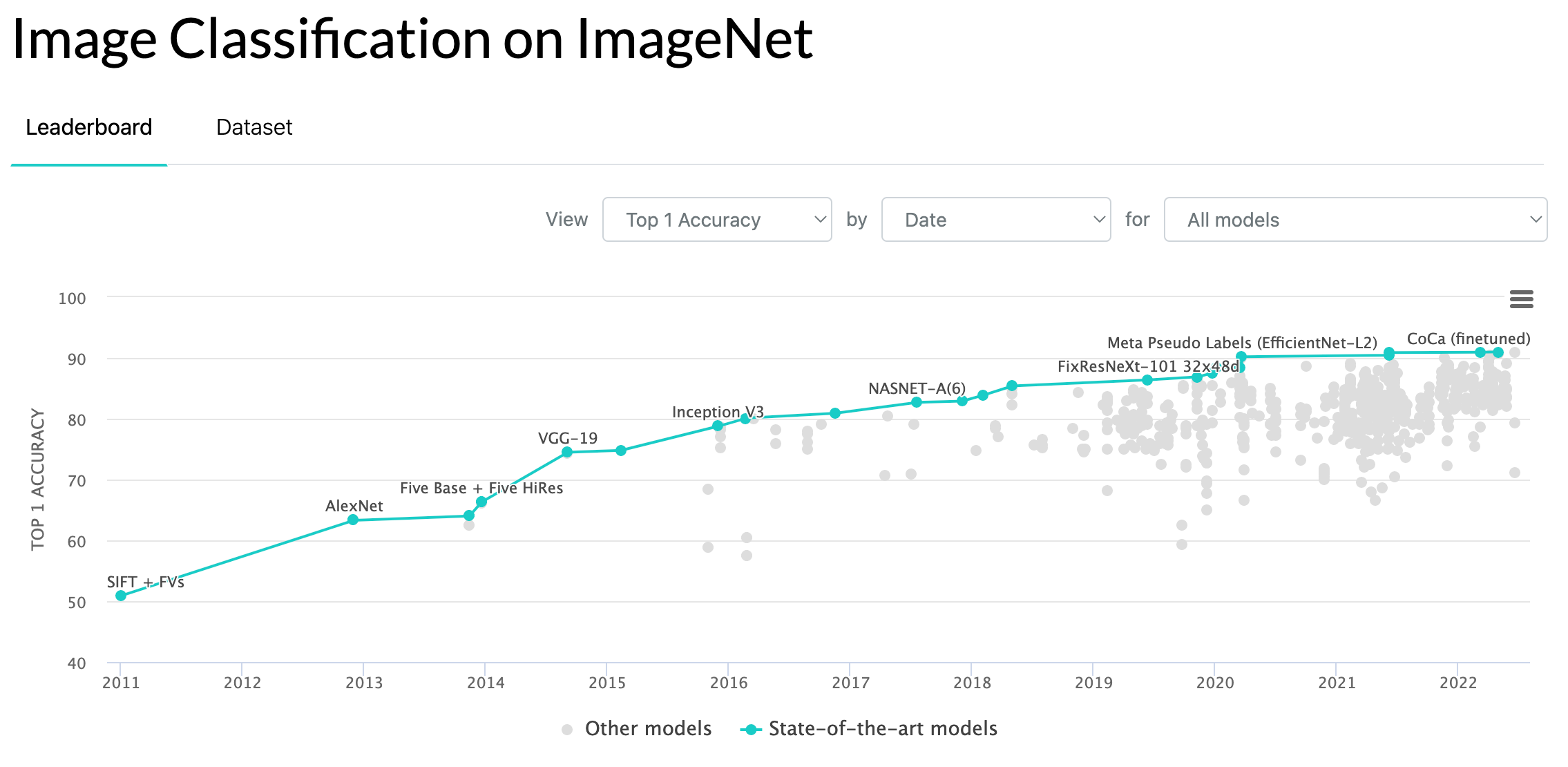

Here is a chart of the state-of-the-art accuracy scores over the last ten years:

Over the last decade, accuracy on this task has improved from getting around half of the predictions correct to getting 9 out of 10 correct, a level widely regarded as beyond human accuracy.
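The accuracy figure behind a benchmark like this is simply the share of predictions that match the true labels. A minimal sketch, using made-up labels and a hypothetical `accuracy` helper rather than real ImageNet data:

```python
def accuracy(predictions, labels):
    """Fraction of predictions that exactly match the true labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must be the same length")
    if not labels:
        raise ValueError("cannot score an empty set of labels")
    correct = sum(p == t for p, t in zip(predictions, labels))
    return correct / len(labels)


# Made-up example: ten images, one of which is misclassified.
true_labels = ["cat", "dog", "dog", "cat", "dog",
               "cat", "cat", "dog", "dog", "cat"]
predicted = ["cat", "dog", "dog", "cat", "dog",
             "cat", "cat", "dog", "dog", "dog"]

score = accuracy(predicted, true_labels)
print(f"{score:.0%} of predictions correct")
```

Because every approach is scored the same way on the same images, the numbers can be compared directly.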

The advancements along the way represent discoveries of small tweaks to Neural Networks which allow them to work much better. It is important to note that the performance on ImageNet is still increasing at a rapid pace.

What is Kaggle?

In the timeline, we also have the founding of Kaggle in 2010. Kaggle is a platform that hosts competitions similar to ImageNet, but across a wide variety of applications, including images, tabular data, language and many other areas. Kaggle has had a similar effect in driving the progress of Deep Learning.

What Natural Language Processing (NLP) shares with Image Processing

What is Natural Language Processing?

Natural Language Processing (NLP) is the intersection of Computer Science and Linguistics. For example, could an algorithm read this sentence and understand what it means? Current NLP technology is able to extract themes from sentences and give people insight into what is being said, which makes it well suited to analyzing large amounts of text.

At Thematic, we use NLP tech to analyze and find insights in large amounts of customer feedback for our clients.

Why is all of this information about image recognition and ImageNet relevant when at Thematic we live and breathe NLP?

Firstly, ImageNet has been the pivotal dataset in the progress of Deep Learning, so it is a good one to look at. Secondly, progress on NLP tasks has followed a similar path to progress with images, although it is around 2 or 3 years behind. The paths are similar because most of the techniques discovered for images can be transferred to language (and vice versa). NLP lags by a few years because in some ways language is more difficult to work with.

You can think about both images and language in terms of the compression of information. For example, a high resolution image or a long sentence can represent the same thing as a low resolution image or a short sentence. Because of all the shared context we have around words and meaning, we are able to compress a lot of language information into a small space (a sentence).

This contrasts with an image. Consider a very small image, like an icon on the iPhone, which is 120x120 pixels. We require 14,400 pixels to represent something that we could describe in one word, or very few words.
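The arithmetic behind that comparison can be checked in a few lines. The sketch below rests on two assumptions that are not from the article: the icon is stored uncompressed at 3 bytes per pixel (8-bit RGB), and the one-word description is the hypothetical word "camera".

```python
ICON_WIDTH = 120
ICON_HEIGHT = 120
BYTES_PER_PIXEL = 3  # assumption: uncompressed 8-bit RGB

# Size of the icon, first in pixels and then in raw bytes.
pixels = ICON_WIDTH * ICON_HEIGHT
image_bytes = pixels * BYTES_PER_PIXEL

description = "camera"  # hypothetical one-word description of the icon
text_bytes = len(description.encode("utf-8"))

ratio = image_bytes / text_bytes
print(f"{pixels} pixels, {image_bytes} bytes as an image")
print(f"{text_bytes} bytes as text, roughly {ratio:.0f}x smaller")
```

Real image files are compressed, so the gap is smaller in practice, but the word still carries the same idea in a tiny fraction of the space because the reader supplies the shared context.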

LLMs and Deep Learning

How relevant is deep learning today given that LLMs like ChatGPT are so prevalent and powerful? Very relevant!

LLMs are trained using deep learning algorithms. Deep learning architectures continue to evolve, both through major changes, most recently the introduction of the Transformer in 2017, and through smaller tweaks to the algorithms. But the core idea remains the same.

The big change with LLMs is that the models and their training data are both so large that these algorithms give rise to an emergent intelligence, which sometimes makes you feel as if you are speaking to a very knowledgeable person.

Narrow vs General Intelligence: Will AI take over the world?

Many people, including smart people and celebrities, are concerned about the dangers that AI brings. People who actually work in AI don’t tend to share these fears, and here's why:

There is a difference between General Intelligence (the ability to be successful across a wide variety of tasks) and the ability to automate the processing of a very narrow task (such as identifying whether an image contains a cat or a dog). Up to about the introduction of ChatGPT and LLMs, Deep Learning efforts mostly consisted of getting near-human or above-human performance on narrow tasks, for example: “Is there a stop sign in this image?”

But since LLMs came on the scene, models have become increasingly general. You can send one an image and ask whether it shows a cat or a dog, or paste in a scientific research paper and ask for a summary. These models are also multi-modal: you can type text, record your voice or send images, and they can respond with text, audio and images.

If you’d like to learn more about Deep Learning for stop signs and autonomous vehicles, I recommend watching this video: AI for full self driving.

Conclusion

Deep Learning continues to advance at a rapid pace. This makes it an interesting (and at times difficult to keep up with) field to follow. While these techniques are likely to be surpassed by something new one day, they are currently the best-performing methods for many tasks and show no signs of reaching their limits. And as performance increases, we are going to see Deep Learning become involved in more parts of the world we live in.

For Thematic, this means that our ability to determine the sentiment surrounding a particular sentence, part of a sentence or theme is continuously improving. By investing in R&D in this field, we are helping more and more companies to discover 'unknown unknowns' in their data and get an accurate view of what their customers want.
